For plant managers and operations directors overseeing large-scale FDI manufacturing operations, the pressure to improve performance is not simply a matter of running lines faster or scheduling more efficiently. The complexity of modern manufacturing has grown to a point where human decision-making alone cannot keep pace with the data being generated every second across the facility. This is the core problem that an AI-driven factory is designed to solve: not by replacing the expertise of experienced engineers and plant leaders, but by giving that expertise a real-time, data-grounded foundation to act on.

This article unpacks what an AI-driven factory actually means in practice, how it differs from earlier smart factory investments, where the real implementation challenges lie, and how manufacturing leaders can build a credible roadmap for adoption.

What Is an AI-Driven Factory?

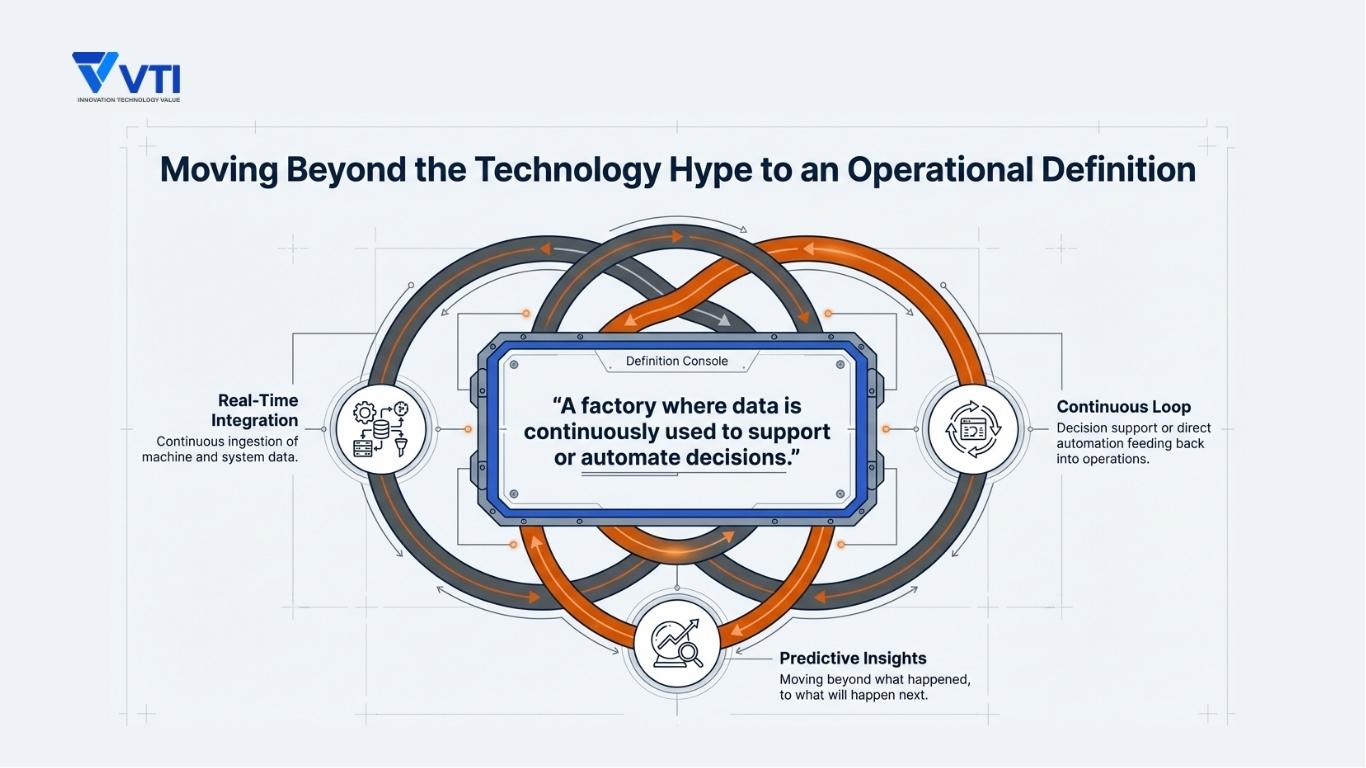

Moving Beyond Technology Definitions

Conversations about AI in manufacturing often get pulled in the wrong direction, toward abstract capability descriptions, vendor demos, or futuristic language that bears little resemblance to actual factory conditions. An AI-driven factory is not defined by which algorithms run in the background or how many sensors are installed per machine bay.

A more useful operational definition is: An AI-driven factory is one where data is continuously collected, structured, and used to support or automate decisions that directly affect production performance. The intelligence is embedded in the daily rhythm of operations. It shows up in:

- A maintenance technician receiving an early-warning alert before a critical asset degrades.

- A production scheduler seeing a real-time recommendation when a line deviation threatens the day’s output.

- Quality managers getting flagged patterns before a batch moves downstream.

The distinction is between data being available and data being acted on.

Core Characteristics of an AI-Driven Factory

While implementations vary by industry, production scale, and maturity level, AI-driven factories tend to share four defining characteristics.

Real-time data integration: Data from machines, production systems, quality checks, and logistics flows into a unified layer continuously.

Predictive insights: Rather than reacting to what has already happened, the system anticipates:

- Which equipment is trending toward failure

- Which process parameter is drifting outside acceptable range

- Which schedule assumption is about to become invalid

Decision support or automation: AI models surface recommendations that reduce the cognitive load on operators, engineers, and managers without removing human accountability from the loop.

Continuous optimization: Over time, the system learns from operational patterns, improves its models, and progressively tightens the feedback loops between measurement and response.

Why This Concept Is Gaining Attention in APAC Manufacturing

Interest in AI-driven factory concepts across APAC has intensified for deeply operational reasons, starting with the growth of manufacturing complexity. Facilities are managing broader product mixes, shorter run cycles, and higher customer expectations around delivery and quality, often within cost structures that do not allow proportional headcount increases.

At the same time, skills and talent constraints still exist. Experienced engineers who understand equipment behavior, process dynamics, and failure patterns are difficult to retain, difficult to recruit, and impossible to clone. There is a growing recognition that critical operational knowledge needs to be systematized before it walks out the door. AI provides a path to do exactly that, by embedding pattern recognition and decision logic into systems rather than relying exclusively on individuals.

For multinational manufacturers managing operations across multiple sites in the region, there is an additional layer of pressure: the need to standardize operating performance, improve headquarters visibility, and reduce the wide variability in how different facilities operate.

From Smart Factory to AI-Driven Factory: What Changes?

Evolution of Manufacturing Systems

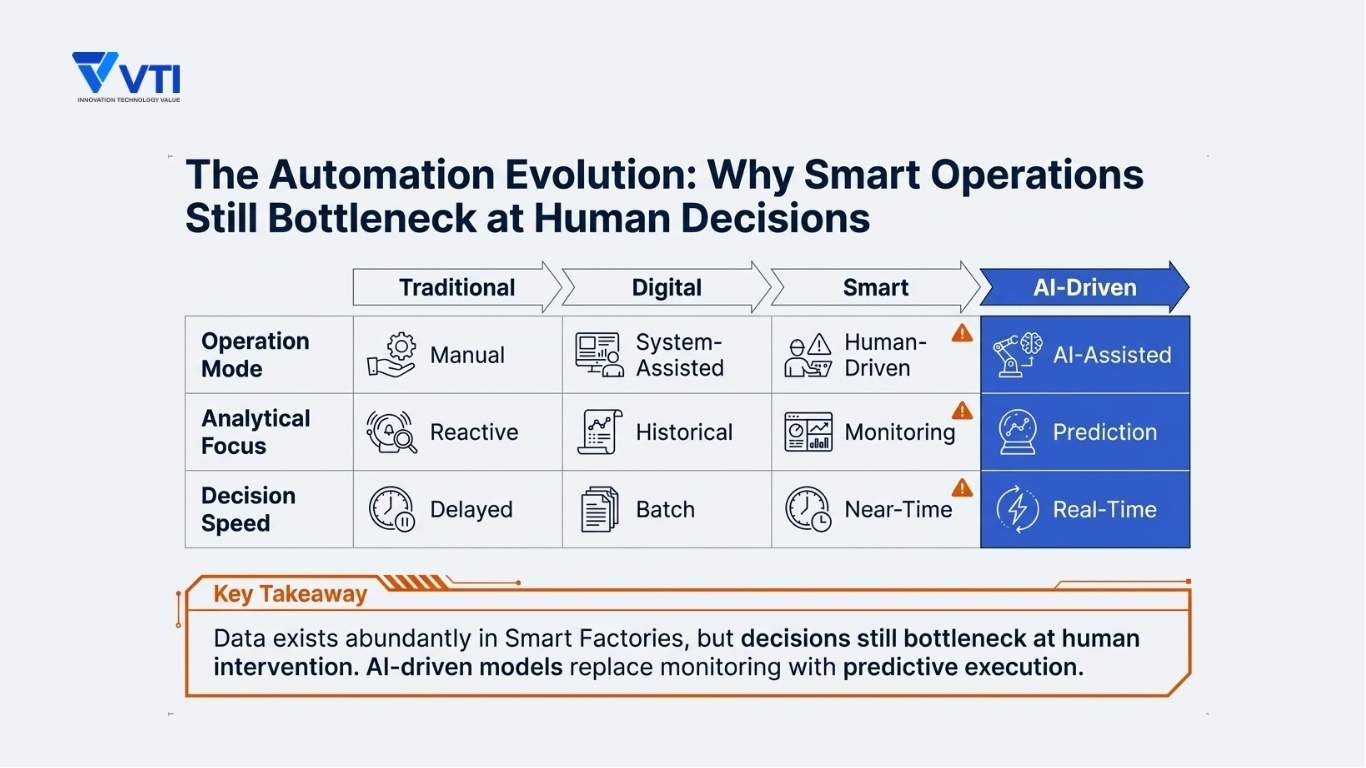

Manufacturing systems have evolved through several distinct phases, each adding a layer of capability without necessarily replacing what came before.

Traditional manufacturing operated on largely manual data collection, paper-based reporting, and decisions that depended heavily on the experience and judgment of individual supervisors. Information moved slowly, and the gap between an event occurring on the shop floor and a decision being made about it could span hours or even days.

The shift toward digital manufacturing introduced automation of data capture — PLCs, SCADA systems, MES platforms — and created the first wave of operational visibility. Data that previously existed only in notebooks or shift handover meetings became structured and retrievable.

The smart factory concept, closely associated with Industry 4.0, went a step further by connecting these systems — machines, MES, ERP, quality systems — and adding real-time dashboards, IoT-based monitoring, and initial analytics capabilities. Smart factory infrastructure created the data foundation that the AI layer now builds upon.

An AI-driven factory represents the next layer: moving from having the data to using it intelligently, continuously, and at scale.

3 Key Differences in Operation

The differences between a smart factory and a fully AI-driven factory are most visible in three dimensions.

- The first is visibility versus decision-making. A smart factory shows what is happening, while an AI-driven factory determines what to do and, in some cases, acts automatically. For example, an AI model can identify the root cause of an OEE decline and recommend corrective action before a shift manager reviews the dashboard.

- The second is monitoring versus prediction. Smart factory systems are largely reactive, alerting when thresholds are breached. Predictive AI extends the time horizon by flagging conditions that are trending toward failure, often before any measurable impact. This is the difference between being notified of a bearing failure and being warned that its vibration pattern indicates likely failure within the next 72 hours.

- The third is human-driven versus AI-assisted operations. In smart factories, decisions still rely on human interpretation and execution. In AI-driven environments, many routine decisions are supported, and in some cases automated, enabling faster, more consistent responses than manual review cycles.
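The monitoring-versus-prediction distinction can be sketched in a few lines. This is a minimal illustration rather than a production model: the readings, the 7.1 mm/s alarm limit, and the function names are assumptions made for the example, and a straight-line fit stands in for whatever model a real deployment would use.

```python
from typing import Optional, Sequence, Tuple


def fit_line(hours: Sequence[float], values: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_limit(hours: Sequence[float], values: Sequence[float],
                      limit: float) -> Optional[float]:
    """Project when the fitted trend crosses `limit`, measured from the last reading.

    Returns None when the trend is flat or falling, and 0.0 when the fitted
    line has already reached the limit by the time of the last reading.
    """
    slope, intercept = fit_line(hours, values)
    if slope <= 0:
        return None
    crossing_hour = (limit - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])


# Hypothetical vibration velocity readings (mm/s), one every 12 hours.
hours = [0, 12, 24, 36, 48]
vibration = [4.0, 4.3, 4.6, 4.9, 5.2]
ALARM_LIMIT = 7.1  # assumed alarm limit for this example only

# Reactive monitoring: silent until the limit is actually breached.
threshold_alarm = vibration[-1] >= ALARM_LIMIT

# Predictive view: how long until the current trend reaches the limit?
remaining_hours = hours_until_limit(hours, vibration, ALARM_LIMIT)
print(threshold_alarm, remaining_hours)
```

With these made-up readings the threshold alarm stays silent, while the trend projection reports roughly 76 hours of margin, which is the kind of lead time that turns a breakdown into a planned intervention.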

Why Smart Factory Alone May Not Be Enough

Many facilities that have invested in smart factory infrastructure are now facing a familiar issue: the data exists, but meaningful value is not being extracted.

This is a gap between data and decision. Dashboards are reviewed periodically rather than continuously. Alerts are frequent enough to create fatigue. Reports are generated and discussed, but rarely change how operations are run day to day.

Smart factory investments improve visibility, but they do not inherently improve how quickly or consistently decisions are made. Operations still depend on human interpretation, and factors such as cognitive limits, shift structures, and organizational layers introduce delay.

AI addresses this gap by ensuring that the right insights reach the right people at the right time — and by enabling certain high-frequency decisions to be executed automatically, without waiting for manual intervention.

Common Operational Challenges That Drive AI Adoption

Lack of Real-Time Visibility and Data Fragmentation

In most large manufacturing facilities, operational data is inherently fragmented. Machine data resides in SCADA or PLC historians, production data in MES, inventory and orders in ERP, and quality records in QMS — while a significant portion of operational knowledge still lives in spreadsheets maintained by individual teams.

The result is not just data dispersion, but decision friction. Creating a coherent view of operations requires manual consolidation across systems, and by the time that view is assembled, it is already outdated. Decisions are made based on a delayed version of reality.

This is the root issue: without real-time, integrated visibility, the factory cannot be managed in real time.

Reactive Operations and High Decision Latency

Most manufacturing operations are still governed by periodic review cycles — shift handovers, daily meetings, and weekly reports. While necessary, these cadences inherently introduce delay into how problems are detected and addressed.

Failures are identified at breakdown, process deviations at quality checks, and inefficiencies only after performance reports are compiled. This latency accumulates as downtime, rework, scrap, and expedited costs.

AI-driven systems fundamentally compress this delay. Instead of discovering issues after impact, they surface signals as they emerge — reducing the gap between event and response.

Planning and Execution Misalignment

Production plans are built on assumptions — machine availability, material supply, labor, and cycle times — typically fixed at the start of a shift or day. In reality, these conditions change continuously.

The gap between plan and execution creates persistent inefficiency. Equipment underperforms, materials arrive late, or quality issues increase output requirements — yet plans remain static until manually adjusted.

AI-assisted scheduling addresses this by continuously aligning plans with real-time conditions, turning production schedules into dynamic decision tools rather than static documents.

Dependency on Human Expertise

Operational excellence in many factories depends heavily on a small number of highly experienced individuals — technicians, engineers, and supervisors whose knowledge is built over years of practice.

The risk is that this expertise is neither scalable nor transferable. When these individuals leave or change roles, the organization loses part of its operational intelligence.

AI provides a way to institutionalize this knowledge — capturing patterns, failure signatures, and process relationships from historical data so that decision quality does not depend solely on individual experience.

Multi-Plant Standardization Challenges

For multi-site manufacturers, standardization is a persistent challenge. While processes may be similar across plants, each site often develops its own KPI definitions, reporting structures, and operating conventions.

This creates a structural limitation: headquarters cannot reliably compare performance across sites, and cross-site benchmarking becomes a manual, time-consuming effort. Best practices identified in one facility are difficult to replicate because they are not encoded in a consistent, transferable way.

A consistent AI-driven factory architecture addresses this by establishing shared data models and standardized decision logic — enabling true cross-site visibility, benchmarking, and scalability.

Core Architecture of an AI-Driven Factory

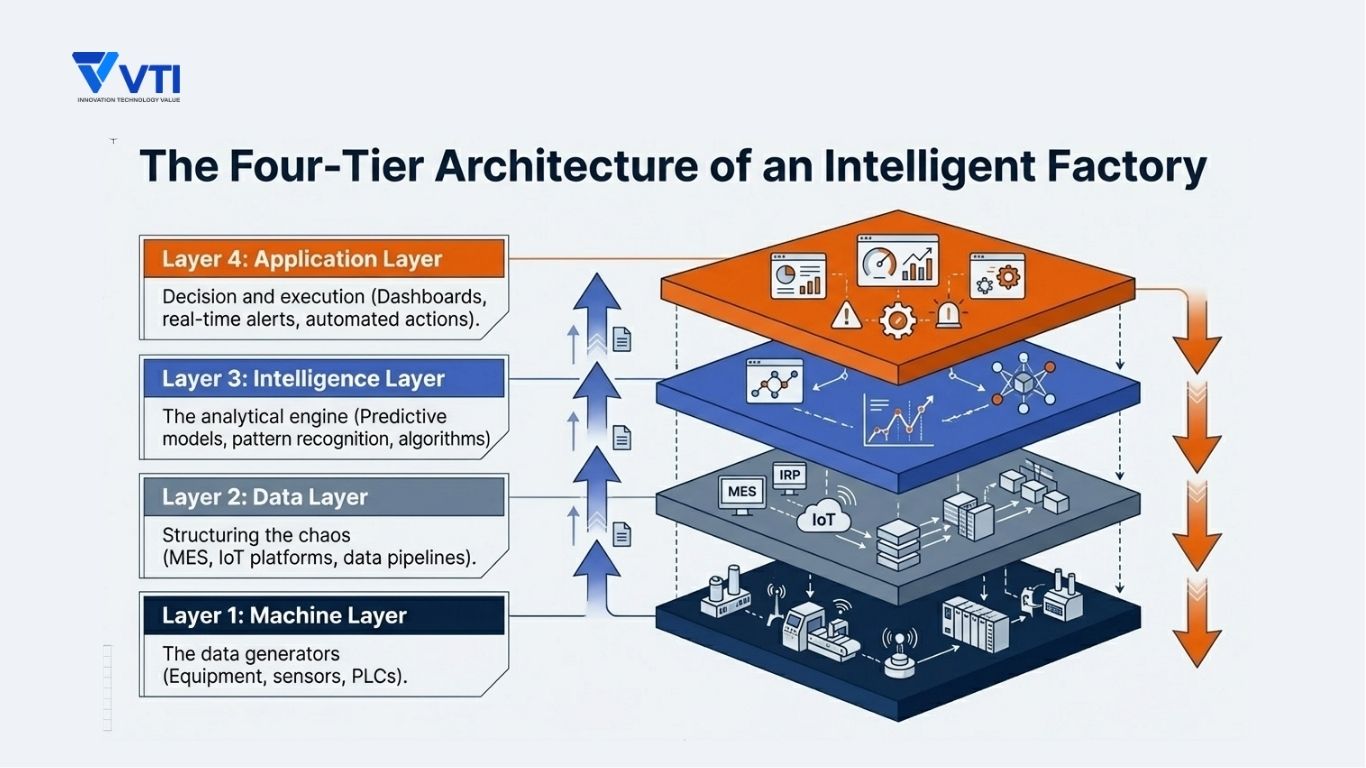

Understanding how an AI-driven factory works operationally requires understanding its architecture at a structural level. There are four distinct layers, and the effectiveness of the whole system depends on how well each layer is built and how cleanly they connect.

Machine Layer: Data Generation

At the foundation of any AI-driven factory is the equipment itself: CNC machines, robotic arms, conveyor systems, injection molding presses, welding stations, packaging lines, environmental controls. These machines generate data continuously — operational state, cycle times, temperatures, pressures, vibration signatures, energy consumption, fault codes.

The quality and granularity of data at this layer varies significantly by equipment generation, manufacturer, and existing connectivity infrastructure. Legacy equipment may require retrofitting with external sensors. Newer machines often expose data through OPC-UA or other standardized industrial protocols. PLCs and SCADA systems sit at this layer, capturing and buffering real-time signals from the production environment.

The machine layer is where the raw material of operational intelligence originates. Its completeness and reliability directly constrain what the layers above can produce.

Data Layer: Integration and Structuring

Raw machine signals are not yet usable by AI models. Before they carry analytical value, they need to be collected, normalized, contextualized, and structured. This is the function of the data layer: connecting data sources, establishing consistent naming conventions and taxonomies, handling different data types and frequencies, and creating reliable pipelines that feed structured information into the intelligence layer.

This layer typically involves MES platforms that contextualize machine data against production orders, Industrial IoT platforms that aggregate and normalize sensor data, and data engineering infrastructure — whether cloud-based, on-premise, or hybrid — that manages storage, transformation, and access.

The data layer is where most AI implementations encounter their first serious difficulty. It is not glamorous infrastructure, but without it, even sophisticated AI models will produce unreliable outputs.
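As a rough sketch of what "normalized and contextualized" means in practice, the snippet below maps vendor-specific tag names onto a shared naming convention, converts units, and attaches the active production order. The tag names, asset IDs, order ID, and the `normalize` helper are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, Optional

# Hypothetical mapping from raw tag names to (asset_id, standardized signal name).
TAG_MAP = {
    "CNC07.SpndlTemp_F": ("cnc-07", "spindle_temperature_c"),
    "cnc7/vib/rms": ("cnc-07", "spindle_vibration_mm_s"),
}

# Unit conversions for tags that do not already report in the shared unit.
CONVERTERS = {
    "CNC07.SpndlTemp_F": lambda fahrenheit: (fahrenheit - 32.0) * 5.0 / 9.0,
}


@dataclass(frozen=True)
class Reading:
    asset_id: str
    signal: str
    value: float
    timestamp: datetime
    order_id: Optional[str]  # production order running on the asset, if known


def normalize(raw_tag: str, raw_value: float, epoch_seconds: int,
              active_orders: Dict[str, str]) -> Reading:
    """Turn one raw historian sample into a structured, contextualized reading."""
    asset_id, signal = TAG_MAP[raw_tag]  # a KeyError exposes unmapped tags early
    convert = CONVERTERS.get(raw_tag, float)
    return Reading(
        asset_id=asset_id,
        signal=signal,
        value=round(convert(raw_value), 3),
        timestamp=datetime.fromtimestamp(epoch_seconds, tz=timezone.utc),
        order_id=active_orders.get(asset_id),
    )


reading = normalize("CNC07.SpndlTemp_F", 212.0, 1_700_000_000, {"cnc-07": "PO-1001"})
print(reading)
```

Failing loudly on an unmapped tag is a deliberate choice in this sketch: silent pass-through of unknown tags is one way inconsistent data reaches the models above.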

Intelligence Layer: AI and Analytics

The intelligence layer is where analytical and AI capabilities operate on the structured data flowing from below. This layer contains the models, algorithms, and analytical logic that translate operational data into insight and action.

This layer typically includes:

- Predictive maintenance models that monitor equipment health signals and project failure probability.

- Machine learning models trained on historical production and quality data to detect process drift or predict yield outcomes.

- Optimization algorithms that evaluate scheduling options against multiple constraints and recommend the highest-performance sequence.

- Anomaly detection systems that continuously scan for patterns that deviate from established operational baselines.

The sophistication of this layer should be calibrated to the actual use cases the facility is targeting and to the quality of the data layer beneath it. Complex models built on inconsistent data produce unreliable outputs that erode trust and ultimately get ignored. Starting with analytically simpler models on clean, well-structured data consistently delivers more operational value than starting with sophisticated models on poor data.

Application Layer: Decision and Execution

The intelligence layer has no value without a mechanism for getting its outputs to the people or systems that can act on them. The application layer serves this function through dashboards that surface KPIs and alerts in a format appropriate for each user role, notification systems that push warnings and recommendations to operators or engineers at the right moment, and integration with execution systems that allows AI-generated recommendations to trigger actions in MES or maintenance management platforms.

This layer is where the human experience of an AI-driven factory is shaped. A predictive maintenance alert that fires to a maintenance engineer’s mobile device at the right specificity and the right time changes behavior. A dashboard that aggregates forty metrics without prioritization does not.

How These Layers Work Together in Practice

The operational logic of an AI-driven factory becomes clearest when traced end-to-end.

Consider a typical predictive maintenance scenario:

A CNC machining center equipped with vibration and temperature sensors continuously sends signals to the SCADA historian (machine layer). Those signals are ingested by an IoT platform, normalized against equipment master data, and structured into time-series datasets (data layer). A predictive model monitors the combined vibration-temperature signature and detects that the pattern is trending toward a signature historically associated with spindle bearing degradation (intelligence layer). A maintenance alert is pushed to the responsible technician’s workstation and logged in the CMMS as a work order for planned inspection within the next 12 hours (application layer).

The spindle does not fail unexpectedly. The line does not go down. A maintenance event that would have caused several hours of unplanned downtime becomes a 45-minute planned inspection at a scheduled interval.

This sequence, from machine data to structured data to model detection to action, is the fundamental operating logic of an AI-driven factory. Replicate it across multiple asset classes, quality checkpoints, and scheduling decisions, and the cumulative operational impact becomes substantial.
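The four-layer flow in this scenario can be traced as a chain of small functions, one per layer. Everything here is a toy stand-in: the sample values, baselines, scoring rule, and 0.15 threshold are assumptions chosen to make the hand-offs visible, not a real degradation model.

```python
from statistics import mean


def machine_layer():
    """Stand-in for a SCADA historian: raw (vibration mm/s, temperature C) samples."""
    return [(2.1, 61.0), (2.2, 61.5), (2.6, 63.0), (3.1, 65.5), (3.7, 68.0)]


def data_layer(samples, asset_id):
    """Attach asset context and give each sample named fields."""
    return [
        {"asset_id": asset_id, "vibration": vib, "temperature": temp}
        for vib, temp in samples
    ]


def intelligence_layer(records, baseline_vibration, baseline_temperature):
    """Toy degradation score: average relative rise of the last three samples over baseline."""
    recent = records[-3:]
    vib_rise = mean(r["vibration"] for r in recent) / baseline_vibration - 1.0
    temp_rise = mean(r["temperature"] for r in recent) / baseline_temperature - 1.0
    return (vib_rise + temp_rise) / 2.0


def application_layer(asset_id, score, threshold=0.15):
    """Turn a score into a planned-inspection work order, or nothing."""
    if score < threshold:
        return None
    return {
        "asset_id": asset_id,
        "type": "planned_inspection",
        "due_within_hours": 12,
        "score": round(score, 3),
    }


records = data_layer(machine_layer(), asset_id="cnc-07")
score = intelligence_layer(records, baseline_vibration=2.1, baseline_temperature=61.0)
work_order = application_layer("cnc-07", score)
print(work_order)
```

Each function only depends on the output of the one before it, which mirrors why a weak lower layer constrains everything above it.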

Key Use Cases of AI-Driven Factories

Predictive Maintenance

Unplanned equipment downtime remains one of the most expensive operational problems in manufacturing. According to research cited by Deloitte, unplanned downtime can cost industrial manufacturers several hundred thousand dollars per hour, depending on the production environment. The financial case for predictive maintenance is therefore not marginal; it is central to OEE performance.

Predictive maintenance uses continuous monitoring of equipment health signals — vibration, temperature, pressure, acoustic emission, power consumption — combined with models trained on historical failure data to project the probability of failure before it occurs. Early warning signals that would not be perceptible to human operators become visible to the model weeks in advance, enabling maintenance to be planned during scheduled downtime windows rather than responding to emergency breakdowns.

The practical value is not just cost avoidance. It is the ability to shift the maintenance organization from a reactive firefighting posture to a planned, condition-based operating model — one that is less stressful, more efficient, and more compatible with production scheduling.

AI-Based Quality Inspection

Manual visual inspection is inherently variable. Different inspectors apply slightly different standards. Fatigue affects judgment over long shifts. High-speed lines generate more units than human inspectors can evaluate thoroughly. The result is a quality control function that is resource-intensive, inconsistent, and not reliably scalable.

AI-based vision inspection systems — using cameras, lighting, and trained image recognition models — evaluate products at line speed without fatigue or variability. They can detect surface defects, dimensional deviations, assembly errors, and marking issues at accuracy levels that consistently exceed manual inspection performance on high-volume, repetitive tasks. In industries where quality escapes carry significant warranty or recall costs, this capability delivers direct financial protection.

Beyond detection, AI quality systems generate structured defect data that feeds back into process analysis: identifying which upstream parameters correlate with which defect types, and enabling process corrections that reduce defect generation at the source rather than filtering it at the inspection gate.
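One simple way to start relating defect data to upstream parameters is to rank parameters by how strongly they correlate with a defect rate across batches. The sketch below uses a plain Pearson correlation on made-up batch data; the parameter names and values are hypothetical, and correlation on its own only suggests where to investigate, not what the cause is.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


# Hypothetical per-batch process parameters (one value per batch).
upstream_parameters = {
    "mold_temperature_c": [180, 182, 185, 188, 191, 195],
    "cycle_time_s": [30.1, 29.8, 30.3, 29.9, 30.2, 30.0],
}
# Hypothetical share of units per batch flagged for surface scratches.
scratch_defect_rate = [0.010, 0.012, 0.015, 0.019, 0.022, 0.027]

# Rank parameters by absolute correlation with the defect rate, strongest first.
ranked = sorted(
    ((abs(pearson(values, scratch_defect_rate)), name)
     for name, values in upstream_parameters.items()),
    reverse=True,
)
for strength, name in ranked:
    print(f"{name}: |r| = {strength:.2f}")
```

In this invented data set, mold temperature ranks well above cycle time, which would make it the first candidate for a process engineer to examine.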

Production Scheduling Optimization

Most production scheduling tools optimize for a snapshot in time, taking inputs as they stand when the plan is generated and producing a sequence that meets constraints as they exist at that moment. When actual conditions deviate from the plan, the schedule becomes stale, and rescheduling requires manual intervention.

AI-assisted scheduling operates on a continuous optimization logic: monitoring actual production conditions — machine availability, material inventory, quality yield rates, order priorities — and dynamically updating recommended sequences as conditions change. The result is a production plan that reflects reality rather than drifting progressively further from it as the shift progresses.
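The idea of re-planning as conditions change can be illustrated with a deliberately simple rule. The sketch below re-sequences jobs on a single line by earliest due date whenever line availability changes, and flags jobs that would finish late. Earliest-due-date is a basic heuristic standing in for a real optimizer, and the jobs, hours, and field names are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    job_id: str
    hours: float      # processing time on the line
    due_hour: float   # hour of the shift by which the job must finish


def resequence(jobs, now, line_available_from):
    """Earliest-due-date plan; returns (job_id, start, end, is_late) tuples."""
    clock = max(now, line_available_from)
    plan = []
    for job in sorted(jobs, key=lambda j: j.due_hour):
        end = clock + job.hours
        plan.append((job.job_id, clock, end, end > job.due_hour))
        clock = end
    return plan


jobs = [Job("A", 3, 10), Job("B", 2, 4), Job("C", 1, 6)]

# Plan made at the start of the shift, assuming the line is available immediately.
original = resequence(jobs, now=0, line_available_from=0)

# A 3-hour unplanned stop is reported: re-plan against the new availability.
updated = resequence(jobs, now=0, line_available_from=3)
at_risk = [job_id for job_id, _, _, is_late in updated if is_late]
print(original)
print(updated)
print(at_risk)
```

The value is less in the sequencing rule itself than in re-running it the moment an input changes, so that the at-risk job is visible while there is still time to react.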

For facilities managing complex product mixes or high-volume repetitive production with frequent changeovers, the throughput and on-time delivery impact of this capability can be significant.

Anomaly Detection in Operations

Individual process parameters have defined acceptable ranges. But process deviations that lead to quality problems or equipment stress often manifest as subtle combinations of parameters — no single reading is technically out of spec, but the combination represents an abnormal operating state. Human operators monitoring individual metrics cannot reliably detect these multivariate patterns.

AI anomaly detection models learn what normal operations look like across multiple correlated parameters simultaneously and flag deviations from that baseline with a specificity that single-metric monitoring cannot achieve. This capability is particularly valuable in process-intensive manufacturing — chemicals, semiconductors, food production, precision machining — where the relationship between process inputs and output quality is complex.
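A small two-parameter example shows how a reading can be inside every individual spec limit and still be abnormal as a combination. The sketch uses Mahalanobis distance from a baseline of normal operation, which is one common choice for this and is not necessarily what any particular product uses. The pressure and temperature values, spec limits, and the threshold of 3.0 are assumptions for illustration.

```python
from math import sqrt

# Hypothetical (pressure, temperature) pairs from normal operation; the two rise together.
normal_points = [
    (100, 50.0), (102, 51.0), (104, 52.2), (106, 52.9),
    (108, 54.1), (110, 55.0), (101, 50.4), (109, 54.6),
]
SPEC_LIMITS = ((98, 112), (49, 56))  # assumed per-parameter acceptable ranges


def fit_baseline(points):
    """Mean and sample covariance (sxx, sxy, syy) of 2-D points."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mean_x) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - mean_y) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mean_x) * (p[1] - mean_y) for p in points) / (n - 1)
    return (mean_x, mean_y), (sxx, sxy, syy)


def mahalanobis(point, mean, cov):
    """Distance of `point` from the baseline, scaled by the baseline's covariance."""
    dx, dy = point[0] - mean[0], point[1] - mean[1]
    sxx, sxy, syy = cov
    det = sxx * syy - sxy ** 2
    # Quadratic form with the inverse of the 2x2 covariance matrix.
    return sqrt((syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det)


def within_spec(point):
    return all(low <= value <= high for value, (low, high) in zip(point, SPEC_LIMITS))


mean, cov = fit_baseline(normal_points)
typical = (105, 52.5)
suspect = (101, 54.8)  # low pressure paired with high temperature

for label, point in (("typical", typical), ("suspect", suspect)):
    distance = mahalanobis(point, mean, cov)
    print(label, within_spec(point), round(distance, 2), distance > 3.0)
```

Both points pass the single-parameter checks, but only the suspect point sits far from the baseline once the relationship between the two parameters is taken into account.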

Energy and Resource Optimization

Energy represents a significant and often underoptimized cost in manufacturing operations. The challenge is that energy consumption patterns are complex — they vary by production volume, product mix, equipment condition, ambient conditions, and operational choices — and the feedback loops between operating decisions and energy cost are too long and indirect for human managers to optimize manually.

AI models trained on energy consumption data, production parameters, and equipment data can identify consumption patterns that indicate inefficiency, recommend operational adjustments that reduce energy intensity without affecting throughput, and in some cases, automate demand-side management decisions that reduce peak consumption costs. For large facilities in markets with significant electricity costs or carbon reduction commitments, the financial and compliance impact of this capability is meaningful.
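A first step toward the pattern detection described above is simply normalizing energy use by output and comparing shifts against a baseline. The sketch below computes energy intensity (kWh per unit) and flags shifts well above the median. The shift data and the 15% margin are made up for illustration, and a real model would also account for product mix and ambient conditions.

```python
from statistics import median

# Hypothetical shift-level totals.
shifts = [
    {"shift": "Mon-A", "kwh": 4200, "units": 2100},
    {"shift": "Mon-B", "kwh": 4100, "units": 2000},
    {"shift": "Tue-A", "kwh": 4300, "units": 2150},
    {"shift": "Tue-B", "kwh": 4000, "units": 1600},
    {"shift": "Wed-A", "kwh": 4250, "units": 2100},
]


def energy_intensity(shift):
    """Energy consumed per unit produced (kWh/unit)."""
    return shift["kwh"] / shift["units"]


baseline = median(energy_intensity(s) for s in shifts)
MARGIN = 1.15  # assumed: flag shifts more than 15% above the median intensity

flagged = [s["shift"] for s in shifts if energy_intensity(s) > baseline * MARGIN]
print(round(baseline, 3), flagged)
```

Note that the flagged shift has the lowest absolute consumption in the set; it only stands out once energy is expressed per unit of output.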

Business Impact: What Results Can Be Expected?

It is important to be direct about business impact without manufacturing artificial precision. The results achieved in any specific implementation depend on the starting maturity level, the quality of implementation, the use cases prioritized, and the organizational capability to act on AI recommendations. Ranges and directional impact are more honest than fixed benchmarks.

Improved OEE (Overall Equipment Effectiveness)

OEE improvement is typically the primary financial driver of AI-driven factory investment. Facilities that begin at OEE levels in the 60–70% range — common in mid-complexity manufacturing environments — have realistically achieved incremental improvements of 5–15 percentage points through systematic deployment of predictive maintenance, scheduling optimization, and quality analytics. The incremental value of each OEE point depends on the production economics, but in high-throughput environments, the financial impact is substantial.
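For readers who want to see where OEE points come from, the sketch below uses the widely used definition of OEE as availability × performance × quality. The shift figures are hypothetical; they show how halving unplanned downtime, with line speed and quality unchanged, moves the headline number.

```python
def oee(planned_minutes, downtime_minutes, ideal_cycle_seconds, total_units, good_units):
    """Return (availability, performance, quality, oee) for one production period."""
    run_minutes = planned_minutes - downtime_minutes
    availability = run_minutes / planned_minutes
    performance = (ideal_cycle_seconds * total_units) / (run_minutes * 60)
    quality = good_units / total_units
    return availability, performance, quality, availability * performance * quality


# Hypothetical 480-minute shift with 72 minutes of unplanned downtime.
before = oee(planned_minutes=480, downtime_minutes=72,
             ideal_cycle_seconds=30, total_units=680, good_units=646)

# Same line speed and quality rate, but downtime cut to 36 minutes.
after = oee(planned_minutes=480, downtime_minutes=36,
            ideal_cycle_seconds=30, total_units=740, good_units=703)

gain_points = (after[3] - before[3]) * 100
print(f"before: {before[3]:.1%}, after: {after[3]:.1%}, gain: {gain_points:.1f} points")
```

In this example the line moves from roughly 67% to roughly 73% OEE, a gain of about six percentage points from the availability component alone.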

Reduced Downtime and Faster Response

The most direct impact of predictive maintenance is a measurable reduction in unplanned downtime. The magnitude varies by asset criticality, starting maintenance maturity, and implementation quality, but a meaningful reduction in unplanned stops — and a corresponding increase in the proportion of maintenance work that is planned rather than reactive — is a consistent outcome in well-executed deployments. Response time when issues do occur also improves, because AI systems surface problems with diagnostic context rather than requiring technicians to investigate from scratch.

Increased Yield and Quality Consistency

AI-based quality inspection and process analytics contribute to improved yield rates by catching defects earlier, identifying upstream process causes more systematically, and reducing the variability that produces inconsistent output. Consistency across shifts and operators improves because the decision logic is embedded in the system rather than dependent on individual judgment calls.

Enhanced Labor Productivity

AI does not eliminate the need for manufacturing labor — it changes the nature of what that labor does. When operators, technicians, and engineers are relieved of the most routine monitoring and data-gathering tasks, they can focus cognitive bandwidth on higher-value activities: exception management, continuous improvement, process development, and decision-making that genuinely requires human judgment. Facilities report meaningful productivity improvement not from headcount reduction alone but from higher-value utilization of existing workforce capacity.

Better Decision Speed and Accuracy

Perhaps the least quantifiable but strategically most important impact is the improvement in decision quality across the organization. When decisions are informed by real-time data and AI-generated recommendations rather than periodic reporting and individual memory, the organization systematically makes better calls — faster, more consistently, and with less dependence on specific individuals being available at the right moment. This is particularly important in high-complexity or high-stakes environments where decision errors carry significant cost.

How to Implement an AI-Driven Factory: A Practical Roadmap

The most common mistake organizations make in AI adoption is treating it as a technology deployment project rather than an operational transformation program. The technology is the enabling layer — but the outcomes depend on how well the foundational work is done before advanced capabilities are introduced.

Phase 1: Data Foundation and Standardization

No AI-driven factory initiative succeeds without clean, consistent, accessible data. This phase is about establishing the infrastructure and governance that makes data trustworthy and usable.

This means auditing existing data sources — understanding what is being captured, at what frequency, with what accuracy, and with what gaps. It means connecting machines to collection infrastructure, whether through native protocols on modern equipment or through retrofit sensors on legacy assets. It means establishing data governance: ownership, naming conventions, quality standards, and validation processes.
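A data audit often starts with two basic questions per signal: how complete is it, and where are the gaps? The sketch below answers both for a single series of timestamps. The function name, the 10-second expected interval, and the 1.5× tolerance are assumptions for the example.

```python
def audit_signal(timestamps, expected_interval_s, tolerance=1.5):
    """Return (completeness, gaps) for sorted epoch-second timestamps.

    completeness: samples received divided by samples expected over the span.
    gaps: (start, end) pairs where consecutive samples are further apart than
          expected_interval_s * tolerance.
    """
    gaps = [
        (earlier, later)
        for earlier, later in zip(timestamps, timestamps[1:])
        if later - earlier > expected_interval_s * tolerance
    ]
    span = timestamps[-1] - timestamps[0]
    expected_count = span // expected_interval_s + 1
    return len(timestamps) / expected_count, gaps


# Hypothetical sensor that should report every 10 seconds but dropped out for a while.
timestamps = [0, 10, 20, 30, 70, 80, 90, 100]
completeness, gaps = audit_signal(timestamps, expected_interval_s=10)
print(f"completeness: {completeness:.0%}, gaps: {gaps}")
```

Running a check like this across every candidate signal gives an early, concrete picture of which use cases the existing data can actually support.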

Critically, this phase also requires defining what decisions the AI is intended to support — because that definition shapes what data needs to be captured, structured, and retained. Without clarity on the decision use cases, data foundation work tends to become directionless and expensive.

Phase 2: Real-Time Visibility

With a data foundation in place, the next phase focuses on making that data accessible and interpretable in real time. This typically involves deploying production dashboards that provide a live view of key operational metrics — OEE by line, equipment status, quality pass rates, production progress against plan — in a format that is genuinely usable by the people who need to act on it.

The goal of this phase is a single source of operational truth: eliminating the parallel tracking systems, manual report compilation, and informational fragmentation that characterize most manufacturing operations before systematic digitalization. This visible, shared picture of operational reality is also the prerequisite for any meaningful cross-functional discussion or shift handover — it replaces “what do you think is happening” with “here is what is actually happening.”

Phase 3: Analytics and Insights

Once real-time visibility is established, the analytical layer can begin extracting patterns and root causes from the data history that accumulates. This phase involves building the analytical capability to identify which process parameters correlate with quality outcomes, which equipment failure modes follow recognizable signature patterns, which scheduling decisions tend to produce throughput losses, and which operational conditions are associated with higher-than-normal energy consumption.

This is where the organization develops genuine analytical fluency — engineers and managers begin using data not just for reporting but for structured problem-solving. Root cause analysis becomes data-driven rather than opinion-driven. Improvement initiatives are scoped and prioritized based on quantified impact rather than intuition.

Phase 4: AI-Driven Optimization

With clean data, real-time visibility, and analytical fluency established, the organization is ready to deploy predictive and optimization models that operate continuously and at scale. This phase introduces predictive maintenance models, AI-based quality inspection, dynamic scheduling assistance, and energy optimization — the capabilities that define a genuinely AI-driven factory.

Critically, deployment in this phase should be incremental. Start with the use cases where the business case is clearest and the data quality is highest. Build operating experience and organizational trust with AI outputs before expanding coverage. Design the human-in-the-loop logic carefully: define clearly which AI recommendations require human confirmation before action, and which can be executed automatically without review.
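The human-in-the-loop boundary is easier to govern when it is written down as an explicit policy rather than left implicit in each integration. The sketch below shows one possible shape for such a policy. The action names, confidence thresholds, and routing categories are hypothetical and would need to be defined by each facility.

```python
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    NEEDS_CONFIRMATION = "needs_confirmation"
    ADVISORY_ONLY = "advisory_only"


# Hypothetical policy: which actions may run unattended, and at what model confidence.
# None means the action always requires a human decision.
POLICY = {
    "adjust_setpoint_within_band": {"auto_min_confidence": 0.90},
    "reschedule_job": {"auto_min_confidence": None},
    "stop_line": {"auto_min_confidence": None},
}


def route(action, confidence, advisory_below=0.5):
    """Decide how an AI recommendation is handled before anything is executed."""
    rule = POLICY.get(action)
    if rule is None or confidence < advisory_below:
        # Unknown actions and low-confidence outputs are shown, never executed.
        return Route.ADVISORY_ONLY
    auto_threshold = rule["auto_min_confidence"]
    if auto_threshold is not None and confidence >= auto_threshold:
        return Route.AUTO_EXECUTE
    return Route.NEEDS_CONFIRMATION


for action, confidence in [
    ("adjust_setpoint_within_band", 0.95),
    ("adjust_setpoint_within_band", 0.70),
    ("stop_line", 0.99),
    ("unlisted_action", 0.99),
]:
    print(action, confidence, route(action, confidence).value)
```

Defaulting unknown actions to advisory-only keeps the automated scope from widening by accident as new recommendation types are added.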

Where Most Companies Struggle

Two failure patterns are most commonly observed in AI manufacturing initiatives.

The first is jumping to AI without data readiness. Organizations that invest in sophisticated AI models before establishing reliable, consistent data pipelines find themselves with models that produce unreliable outputs — and an organization that quickly loses confidence in AI-generated insights. The technology gets blamed for a failure that was fundamentally a data and foundation problem.

The second is underestimating integration complexity. Connecting AI outputs to production systems — getting a predictive maintenance alert into the CMMS, getting a scheduling recommendation into the MES — requires careful integration work that is often more complex and time-consuming than anticipated. Without that integration, AI insights remain advisory documents reviewed in meetings rather than operational inputs that change what happens on the factory floor.

Challenges and Considerations in AI Adoption

Data Quality and Availability

Data quality problems do not reveal themselves during a demo or a proof-of-concept phase. They surface when a model is deployed at production scale and begins producing outputs that do not reflect operational reality. The gap between available data and model-ready data is typically larger than expected — requiring significant cleaning, normalization, labeling, and enrichment work before training and deployment can begin.

Legacy Systems and Integration Constraints

Most large manufacturing facilities are running a mix of system generations — modern MES platforms alongside equipment from the 1990s, cloud analytics tools alongside on-premise SCADA historians, new IoT infrastructure alongside legacy PLC networks. Connecting these layers cleanly and reliably requires engineering judgment, not just platform installation. Integration complexity is a real, persistent challenge that needs to be scoped honestly at the outset.

Organizational Change and Adoption

Technology deployment is the easier part of AI transformation. The harder part is changing how people work. Operators who have relied on experience and intuition for years are not automatically going to trust AI-generated recommendations — particularly early in deployment, when the models are still maturing. Building trust requires transparency about how the AI works, visible examples of recommendations that proved correct, and clear escalation paths when operators disagree with AI output. Change management is a technical requirement for achieving adoption.

Skill Gaps and Training Needs

Deploying an AI-driven factory creates skill demands that most facilities are not currently equipped to meet internally. Data engineers, AI/ML specialists, integration architects, and change management practitioners with manufacturing domain knowledge are all needed — and in short supply. Organizations that attempt to build all of this capability internally from scratch face timeline and cost risks. Partnerships with implementation specialists who bring both the technical expertise and the manufacturing domain knowledge are often the more practical path.

Managing Expectations and ROI

AI projects often generate high initial expectations that are not calibrated to implementation reality. The business case for AI in manufacturing is real and substantive — but it materializes over months and years of incremental deployment, not immediately after go-live. Setting realistic timelines, defining success metrics at each phase, and building a portfolio of use cases with varying time horizons (quick wins alongside longer-term strategic capabilities) is essential for maintaining leadership commitment through the full implementation lifecycle.

AI-Driven Factory in Multi-Plant Operations

Standardizing Operations Across Sites

For manufacturers managing multiple production facilities, the architectural decisions made in AI deployment have long-term consequences for the ability to compare, benchmark, and improve performance across the network. Keeping sites comparable requires deliberate choices about data standards before deployment begins.

A common KPI framework, with consistent definitions for OEE components, quality metrics, downtime categorization, and inventory measures, must be established as a precondition for meaningful cross-site analysis. Shared data models ensure that data from different sites is genuinely comparable, rather than sites using the same terms for different underlying measurements. This standardization work is unglamorous but strategically important: without it, multi-plant AI deployment produces a collection of isolated site-level tools rather than a genuinely networked intelligence capability.
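
As an illustration of what a consistent definition looks like in practice, the sketch below computes OEE using the standard Availability × Performance × Quality breakdown from a single shared record type. If every site feeds the same fields into the same function, the resulting numbers are comparable by construction. The `ShiftRecord` fields and the sample values are assumptions made for this example, not a prescribed data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftRecord:
    """One shared record shape for every site (hypothetical field names)."""
    planned_time_min: float      # planned production time
    downtime_min: float          # all stops counted against availability
    ideal_cycle_time_min: float  # ideal minutes per unit
    total_count: int             # all units produced, good or bad
    good_count: int              # units passing quality checks


def oee_components(rec: ShiftRecord) -> dict:
    """Standard OEE breakdown: Availability x Performance x Quality."""
    run_time = rec.planned_time_min - rec.downtime_min
    if rec.planned_time_min <= 0 or run_time <= 0 or rec.total_count <= 0:
        raise ValueError("planned time, run time, and total count must be positive")
    availability = run_time / rec.planned_time_min
    performance = (rec.ideal_cycle_time_min * rec.total_count) / run_time
    quality = rec.good_count / rec.total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Example with made-up numbers for one shift.
shift = ShiftRecord(planned_time_min=480, downtime_min=80,
                    ideal_cycle_time_min=0.5, total_count=720, good_count=684)
print(oee_components(shift))
```

The hard part is not the arithmetic but agreeing across sites on what counts as downtime, planned time, and a good unit. That agreement is what the shared record type is meant to encode.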

Improving HQ Visibility and Governance

One of the most immediate business cases for AI-driven factory implementation in a multi-site context is improved headquarters visibility. Today, most regional and corporate operations teams work from lagging reports that are compiled manually at site level, aggregated inconsistently, and reviewed in periodic governance meetings that can only describe the past.

A well-implemented AI layer provides real-time operational visibility across the full site network: which facilities are tracking below target, which equipment health trends are emerging as risk factors, which sites are achieving best-in-class performance and on which metrics. This visibility transforms how regional and corporate leadership can manage the manufacturing network — from periodic review to continuous monitoring, and from broad assessment to targeted intervention.

Reducing Variability Across Factories

The most operationally powerful long-term benefit of a networked AI-driven factory model is the ability to systematically reduce variability across sites. When the decision logic that produces strong performance at one facility is embedded in a shared AI model rather than residing in the knowledge of site-specific individuals, it becomes replicable. Scheduling logic that has been proven effective at Site A can be applied at Site B. Process control parameters that yield consistently high quality at one facility become the recommended baseline for others.

This is the difference between best-practice sharing as a management activity — which is inherently slow, inconsistent, and person-dependent — and best-practice replication as a system function. For manufacturers serious about network-level operational excellence, this capability is a structural competitive advantage.

Is Your Factory Ready for AI?

Before committing to an AI-driven factory program, leadership teams should honestly assess readiness across four dimensions. This assessment should not be treated as a barrier to beginning — many organizations start with meaningful capability gaps — but it shapes the sequencing and scope of Phase 1 work.

Data Readiness

Are your production machines connected to data collection infrastructure, or are key assets still running blind? Is operational data stored in a format that is accessible for analysis, or is it fragmented across disconnected historians, spreadsheets, and paper records? Do you have confidence in the consistency and accuracy of the data you currently have, or do different people produce different numbers from the same underlying operations?

Organizations whose answers to these questions reveal significant gaps should plan for substantial Phase 1 investment in data infrastructure before expecting analytical or AI outcomes.

System Readiness

Is a Manufacturing Execution System (MES) in place and actively used as the production record of truth? Is IoT infrastructure capable of capturing data at the frequency and granularity required for the intended AI use cases? Are integration pathways between systems documented and maintained, or do they exist as informal workarounds? System readiness determines how much of Phase 1 infrastructure build is required before AI deployment can begin.

Process Maturity

AI works best when it is optimizing processes that are already reasonably well-defined and consistently executed. Highly variable, unstandardized processes are difficult to model reliably: the AI has trouble learning “what good looks like” when good is defined differently across shifts, lines, or sites. Process standardization is not a prerequisite for starting, but it significantly affects the accuracy and reliability of AI outputs.

Organizational Readiness

Does leadership have aligned expectations about the timeline and investment required for AI deployment? Is there a sponsor with sufficient authority and sustained attention to drive a multi-phase transformation? Is the organization’s operational culture compatible with data-driven decision-making, or are decisions currently made predominantly based on hierarchy and experience rather than data?

Organizational readiness is often the most important and most underestimated dimension of AI implementation preparedness. Technology can be deployed; organizational alignment needs to be built deliberately.

Frequently Asked Questions (FAQs)

What is an AI-driven factory?

An AI-driven factory is a manufacturing facility where data generated by equipment, processes, and systems is continuously collected, analyzed, and used to support or automate operational decisions.

How is it different from a smart factory?

A smart factory primarily focuses on connectivity and visibility — connecting machines, creating dashboards, and giving operators access to real-time operational data. An AI-driven factory goes further by adding an intelligence layer that analyzes that data, identifies patterns, generates predictions, and provides decision support or automation.

Do you need MES to implement AI in manufacturing?

An MES is not strictly required, but it significantly simplifies AI implementation in production environments. MES systems provide the contextualization layer that transforms raw machine signals into production-meaningful data — linking equipment events to production orders, products, and shifts.
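
The contextualization step can be pictured as a time-window join: each raw machine event is matched to the production order that was active on that machine at that moment. The sketch below shows the idea in a few lines, under simplifying assumptions. The `ProductionOrder` and `MachineEvent` types and the `contextualize` function are hypothetical and do not reflect the API of any particular MES product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProductionOrder:
    order_id: str
    product: str
    shift: str
    machine_id: str
    start: float   # order start time (seconds)
    end: float     # order end time (seconds), exclusive


@dataclass(frozen=True)
class MachineEvent:
    machine_id: str
    timestamp: float
    signal: str    # e.g. "STOP", "ALARM", "CYCLE_DONE"


def contextualize(events, orders):
    """Attach order, product, and shift to each raw machine event.

    Events with no matching order keep None for the context fields, which
    is itself useful: it exposes signals the production record cannot explain.
    """
    enriched = []
    for ev in events:
        match = next(
            (o for o in orders
             if o.machine_id == ev.machine_id and o.start <= ev.timestamp < o.end),
            None,
        )
        enriched.append({
            "machine_id": ev.machine_id,
            "timestamp": ev.timestamp,
            "signal": ev.signal,
            "order_id": match.order_id if match else None,
            "product": match.product if match else None,
            "shift": match.shift if match else None,
        })
    return enriched


# Example with made-up orders and events.
orders = [ProductionOrder("PO-1", "widget-A", "day", "M1", 0, 100),
          ProductionOrder("PO-2", "widget-B", "day", "M1", 100, 200)]
events = [MachineEvent("M1", 50, "STOP"), MachineEvent("M1", 250, "ALARM")]
for row in contextualize(events, orders):
    print(row)
```

Without an MES, this mapping has to be reconstructed from schedules, spreadsheets, or operator logs, which is where much of the extra implementation effort comes from.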

How long does it take to implement AI in a factory?

Realistic timelines vary based on starting maturity, factory complexity, and the scope of use cases targeted. For organizations starting from a limited data foundation, Phase 1 data infrastructure and connectivity work typically requires 3–6 months. Real-time visibility capabilities can often be deployed within 6–12 months of starting. Mature AI capabilities — predictive maintenance, quality analytics, scheduling optimization — begin delivering consistent value at 12–24 months.

What are the main risks of AI adoption?

The most common risks are poor data quality undermining model reliability, integration complexity exceeding planned timelines and budgets, organizational resistance to AI-generated recommendations reducing adoption and therefore realized value, and expectations being set at a level that cannot be sustained through the implementation period. These risks are manageable with proper planning — but they require explicit mitigation, not the assumption that they will resolve themselves.

What kind of ROI can be expected?

ROI varies significantly by implementation scope, starting maturity, and operational context. Organizations that have deployed AI-driven manufacturing capabilities consistently report directional benefits in the form of OEE improvement, reduced unplanned downtime, improved quality yield, and reduced energy consumption.

Conclusion: From Data Visibility to Operational Intelligence

The shift from smart factory to AI-driven factory is fundamentally a shift in how manufacturing operations use data — moving from information that is available to intelligence that is continuously applied.

For manufacturing leaders assessing where to begin: the most important first question is not which AI use case to deploy. It is whether your current data foundation is ready to support the AI models you want to run. Answer that question honestly, and the implementation roadmap becomes considerably clearer.

VTI works with manufacturing organizations across APAC to design and implement AI-driven factory solutions — from data foundation and MES deployment through advanced analytics and predictive operations. If you’re evaluating where your facility stands and what a practical improvement roadmap looks like, our team is available to work through that assessment with you.
